One of the most pressing issues facing companies in the payments industry is how they handle their data. Since financial transactions and services generate large amounts of data, financial institutions need to have a robust system in place to effectively process, analyze, and store the information.

Security is paramount, as the data is highly sensitive, and hackers are constantly trying their utmost to access it. And due to how valuable and sensitive the data is, companies must comply with a myriad of government regulations dictating acceptable practices. It’s essential that companies understand where their data comes from and how to maintain it properly.

To learn more about the importance of data governance, data management, and what solutions exist to help companies navigate the problems associated with data handling, PaymentsJournal sat down with Adwat Joshi, founder and CEO of DataSeers, and Tim Sloane, VP of Payments Innovation at Mercator Advisory Group.

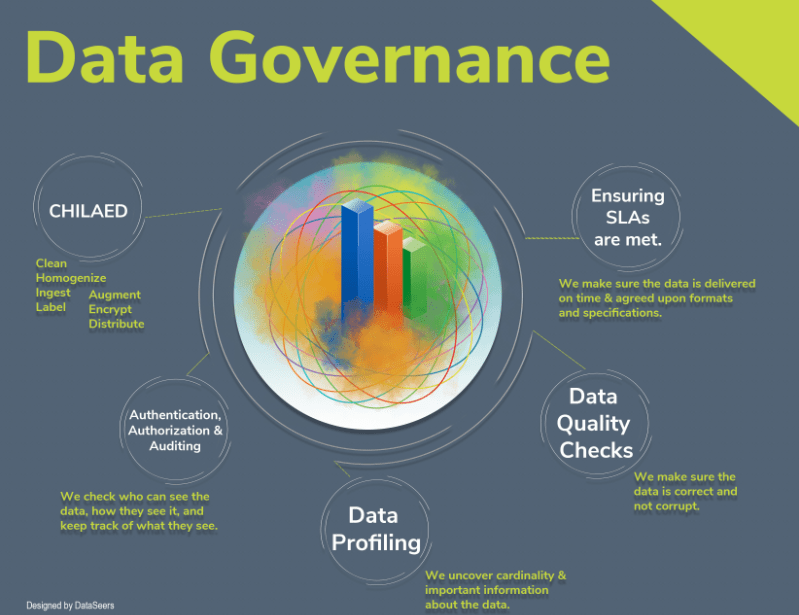

During the conversation, Joshi and Sloane discussed DataSeers’ five-step approach to data governance, as well as data management, data security, and what data quality means. They also covered encryption and how it intersects with authentication and authorization.

DataSeers’ approach to data governance

Data governance is a major question facing the payments industry. Regulators are increasingly scrutinizing how companies are governing their data and whether they are doing so correctly. Even without the scrutiny of regulators, solid data governance is vital for companies to be successful.

This is because bad data is hard for companies to use effectively. “If I don’t have what I need, and it’s not in a good format, it’s not right, then how can I ever do anything with it?” explained Joshi.

One element of DataSeers’ approach is data profiling, which entails reviewing the various data types to determine whether the data consists of numbers, text, or a mix of the two. “It gives you a clear understanding of who is sending what,” said Joshi. With that understanding, a company can ask senders to clean their data before it accepts it.
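To make the idea of profiling concrete, here is a minimal Python sketch that labels each column of an incoming feed as numeric, text, or mixed. It is an illustration only, not DataSeers’ implementation; the feed, the column names, and the functions `classify_value` and `profile_column` are all hypothetical.

```python
from collections import Counter


def classify_value(value: str) -> str:
    """Label one raw field value as 'empty', 'numeric', or 'text'."""
    stripped = value.strip()
    if not stripped:
        return "empty"
    try:
        float(stripped)
        return "numeric"
    except ValueError:
        return "text"


def profile_column(values):
    """Return ('numeric' | 'text' | 'mixed' | 'empty', per-kind counts)."""
    counts = Counter(classify_value(v) for v in values)
    kinds = {kind for kind in counts if kind != "empty"}
    if not kinds:
        verdict = "empty"
    elif len(kinds) == 1:
        verdict = kinds.pop()
    else:
        verdict = "mixed"
    return verdict, dict(counts)


# Hypothetical feed from one sender: column name -> raw values
feed = {
    "amount": ["12.50", "7", "100.00"],
    "merchant": ["ACME", "Globex", ""],
    "card_last4": ["4242", "N/A", "1881"],
}

profile = {name: profile_column(values)[0] for name, values in feed.items()}
```

In this sketch a column flagged as mixed, such as `card_last4`, is the kind of finding a company could send back to the sender with a request to clean the data.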

DataSeers’ solution also enables authentication, authorization, and auditing. “We want to make sure that we authenticated anybody who is trying to access the data,” said Joshi. Authorization means that only people with proper permission to view data are allowed to do so. The last part, auditing, means that companies can keep track of who accessed the data, when, and which data specifically. This helps guard against internal fraud.
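The three steps can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not how DataSeers’ platform works: the user store, the permission table, the fixed salt, and the `read_dataset` function are hypothetical, and a production system would use per-user random salts and a persistent, tamper-resistant audit log.

```python
import hashlib
import hmac
from datetime import datetime, timezone

SALT = b"demo-salt"  # hypothetical; real systems use a random salt per user


def hash_password(password: str) -> bytes:
    """Derive a password hash with PBKDF2 (standard library)."""
    return hashlib.pbkdf2_hmac("sha256", password.encode(), SALT, 100_000)


USERS = {"alice": hash_password("correct horse")}  # hypothetical user store
PERMISSIONS = {"alice": {"transactions"}}          # user -> datasets allowed
AUDIT_LOG = []                                     # every attempt is recorded


def read_dataset(user: str, password: str, dataset: str) -> bool:
    """Authenticate, authorize, and audit one access attempt."""
    stored = USERS.get(user)
    # Authentication: is this really the user they claim to be?
    authenticated = stored is not None and hmac.compare_digest(
        stored, hash_password(password)
    )
    # Authorization: may this user see this particular dataset?
    granted = authenticated and dataset in PERMISSIONS.get(user, set())
    # Auditing: record who asked for what, when, and the outcome.
    AUDIT_LOG.append({
        "when": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "dataset": dataset,
        "authenticated": authenticated,
        "granted": granted,
    })
    return granted


results = [
    read_dataset("alice", "correct horse", "transactions"),  # allowed
    read_dataset("alice", "wrong password", "transactions"), # fails authentication
    read_dataset("alice", "correct horse", "cardholders"),   # fails authorization
]
```

Note that denied attempts are logged as well as granted ones, since failed access attempts are often the more interesting entries when investigating internal fraud.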

Sloane noted that authentication, authorization, and auditing are more important than ever due to a variety of new regulations, including the E.U.’s General Data Protection Regulation (GDPR) and California’s data regulations.

Overall, DataSeers’ approach assumes that the data is coming in as a mess. Therefore, the company’s proprietary process is designed to clean the data, homogenize it, label it, enhance it, encrypt it, and allow for it to be seamlessly distributed. By taming the data, DataSeers enables companies to more effectively leverage it.

Master Data Management

Understanding and documenting where data is coming from, when it is coming, and what is coming is very important for companies. Keeping track of all of this is known as master data management (MDM). Sloane likened MDM to housekeeping, pointing out that while MDM is often overlooked, knowing where everything is kept, whether it is labeled, and who has access to what is essential.

Since it is such an integral part of many companies’ workflow, MDM is a very big industry, with many companies offering products which allow clients to better manage their data.

However, unlike a lot of solutions in the market, DataSeers’ approach is tailor-made for the payments industry. “We understand what is required in order to have a better compliance platform, a better fraud platform,” and even a better reconciliation platform, said Joshi.

Data security

The next major aspect of effective data governance and management is data security.

“My definition of data security is the person who’s supposed to have access to the data at the very specific time is the only person, at that very specific time, who gets access to that very specific data,” offered Joshi. If the wrong person tries to access the data, or even the right person but at the wrong time, denying them access is key to data security.
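Joshi’s definition combines three conditions: the right person, the right data, and the right time. A minimal Python sketch of such a check follows; the `Grant` record, the access window, and the `may_access` function are assumptions made for illustration, not part of DataSeers’ product.

```python
from dataclasses import dataclass
from datetime import datetime, time


@dataclass(frozen=True)
class Grant:
    """One permission: who may read which dataset, and during which hours."""
    user: str
    dataset: str
    start: time
    end: time


# Hypothetical grant: alice may read settlements during business hours only
GRANTS = [Grant("alice", "settlements", time(9, 0), time(17, 0))]


def may_access(user: str, dataset: str, at: datetime) -> bool:
    """True only if the person, the data, and the time all match a grant."""
    return any(
        g.user == user and g.dataset == dataset and g.start <= at.time() < g.end
        for g in GRANTS
    )


in_hours = may_access("alice", "settlements", datetime(2024, 1, 2, 10, 0))
after_hours = may_access("alice", "settlements", datetime(2024, 1, 2, 22, 0))
wrong_person = may_access("bob", "settlements", datetime(2024, 1, 2, 10, 0))
```

Here the same user is allowed at 10:00 and refused at 22:00, which mirrors the point that even the right person should be denied at the wrong time.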

Joshi provided a useful analogy of someone securing their house. If someone invested in a series of security tools, such as a camera, fancy lock, and expensive security service, yet forgot to lock the front door, all the fancy tools would be for naught. Similarly, “the basic thing you have to really think about is how well protected your data is at the source,” said Joshi.

DataSeers’ approach to securing data is unique, said Joshi. “We call it the submarine architecture.” It works by compartmentalizing the data, so if one section gets compromised, the rest of the data is safe, similar to how a submarine compartment can fill up with water, but the rest of the submarine remains safe. An end user faces multiple steps upon logging into the system and is ultimately unable to get all the way to the source.

Defining data quality and understanding its importance

Assessing the quality of a data set is not a straightforward exercise. You cannot just assign an arbitrary number to a data set, labeling its quality as 80, for instance, and have that make any sense. Instead, the quality of data is measured by your ability to act on it, said Joshi.

“If you are able to act on it with less noise and high accuracy, your data is potentially very good quality,” he said.

Sloane agreed, adding that the “quality of data is dependent upon the purpose that you’re going to apply the data to.”

To this end, DataSeers invented a quality index on a scale of 1 to 100, which signals how actionable the data is in various situations. Depending on the specific situation and corresponding algorithm, each data element is weighted for how important it is.
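The article does not describe how the index is calculated. One plausible reading of a purpose-dependent, weighted score is a weighted average of per-field usability, sketched below in Python. The field scores, the weights, and the `quality_index` function are hypothetical and are not DataSeers’ formula.

```python
def quality_index(field_scores: dict, weights: dict) -> int:
    """Weighted average of per-field scores (each 0.0 to 1.0), mapped to 1-100."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    weighted = sum(
        weight * field_scores.get(field, 0.0) for field, weight in weights.items()
    ) / total_weight
    return max(1, round(weighted * 100))  # clamp to the 1-100 scale


# Hypothetical share of usable values per field in one data set
field_scores = {"name": 0.98, "address": 0.60, "amount": 1.00}

# Hypothetical weights: fraud screening leans on addresses,
# reconciliation only needs amounts
fraud_weights = {"name": 1, "address": 3, "amount": 1}
recon_weights = {"name": 0, "address": 0, "amount": 1}

fraud_score = quality_index(field_scores, fraud_weights)  # 76
recon_score = quality_index(field_scores, recon_weights)  # 100
```

The same data set scores 76 for one purpose and 100 for another, which is consistent with Sloane’s point that quality depends on what the data will be used for.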

“Encryption has to happen at multiple different levels”

Encryption is another important aspect of good data management. As Joshi noted, encryption is a broad term, which can refer to data that is encrypted to simply comply with PCI standards, or data that is encrypted on multiple levels. In the former case, the data is only encrypted at rest, which means it can still be vulnerable.

Therefore, “If you really want to do encryption, if you really want to encrypt your data, data encryption has to happen at multiple different levels,” said Joshi. DataSeers offers this level of encryption in its latest release of software. “We are supporting even column level encryption,” stated Joshi.

“We are trying to keep the data encrypted as much as possible.”

Such an approach allows users to access reports, but maybe not a specific column contained within, depending on who the user is and the column in question.
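The effect on a report can be illustrated with a short Python sketch. To stay within the standard library, it uses simple redaction as a stand-in for real column-level encryption, where each protected column would be encrypted with a key available only to authorized roles. The report, the roles, and the `view_report` function are hypothetical.

```python
# Hypothetical report rows
REPORT = [
    {"txn_id": "T1", "amount": "25.00", "card_number": "4111111111111111"},
    {"txn_id": "T2", "amount": "310.40", "card_number": "5500000000000004"},
]

# Hypothetical policy: which roles may read each column in the clear
COLUMN_ROLES = {
    "txn_id": {"analyst", "compliance"},
    "amount": {"analyst", "compliance"},
    "card_number": {"compliance"},
}

REDACTED = "***"  # stand-in for ciphertext the role cannot decrypt


def view_report(rows, role):
    """Return the report as seen by one role, hiding columns it may not read."""
    return [
        {
            column: value if role in COLUMN_ROLES.get(column, set()) else REDACTED
            for column, value in row.items()
        }
        for row in rows
    ]


analyst_view = view_report(REPORT, "analyst")
compliance_view = view_report(REPORT, "compliance")
```

Both roles can open the same report, but only the compliance role sees the card numbers; columns with no policy entry are hidden by default.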

Sloane pointed out that encryption like this combines authentication and authorization, allowing people to view only what they’re supposed to view.

Joshi agreed, adding, “One of the things that we are implementing is everything that comes out of our platform will be encrypted in such a way that the only person who can decrypt it is going to be our clients.”

Approaches to data such as the one embraced by DataSeers provide companies with the platform necessary to get the most out of their data.